|

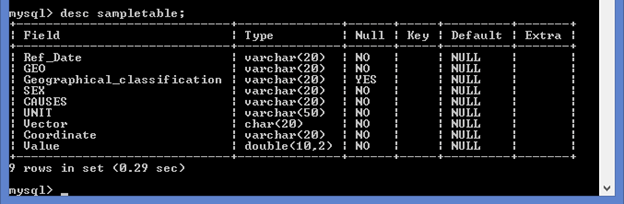

Here its the same as the previous run with no data changes, but it says as Duplicate entry 'Amy Whitaker' for key 'PRIMARY' Insert into `aggregate_table`.Īnd also in one of the Output data messages it says, Output Data (10) 66497 records were written to odbc:DSN=MySQL-DSN UID=root PWD=_EncPwd1_ (aggregate_table). Output Data (10) DataWrap2ODBC::SendBatch: Duplicate entry 'Amy Whitaker' for key 'PRIMARY' Insert into `aggregate_table`(`name`,`no_of_products`) Values (?,?)Īccording to the 'Update Insert if new', it should try to update or insert only if there is new data. But this time it threw an error as follows: To achieve this step, I needed to run the workflow again, I didn't touch the Mysql Products table. Ingest the same file at least 2 times, without truncating the products table. The first run was fine, I was able to insert the names with their count beside.Ħ. The Output data is having 'Update Insert if new' output option for the Aggregated table ('name' as Primary Key). Comparing the CSV and Mysql Products table and finally ingested a single table with 'name' and 'no. Hi applied the Alteryx only solution suggested by you. The Alteryx database (.yxdb) isn't suited to your purposes since it's really just a single highly compressed table that can only be written to and read as a whole so it would fail your criteria 2 and 6. This should maximize available RAM and allow the process to run a quickly as possible.Īs far as other databases, MongoDB, the free edition of SQL Server, etc, they'll all run against the same memory issues. In the meantime, close all other applications, leaving only Alteryx designer open. The long term solution is to get more RAM.

The OS is constantly swapping memory out to disk, which is a very slow operation. Chrome or any MS office application, they will reduces the available memory even more.

If you have any other memory intensive programs open, i.e. The OS takes up about 2GB of this leaving only 2GB to be shared between Alteryx and MySQL. Both Alteryx and MySQL have minimum 4GB specifications (8GB recommended), but you're trying to run both of them on the same machine. The database will then update the main table as quickly as speed issue is probably caused by having only 4GB of RAM on your machine. Use "Delete Data and Append" to dump the data into the temp table. Create a temp table in your database with the same structure as your main table and create an after insert trigger that updates the records in the main table based on the values inserted into the temp one. Only write these changed records back to the database.Ģ. A way to do this is to read all the data from both the csv and the MySQL table and compare all the fields joined on primary key, to find only the records that have changed. Simply passing all the records to the database is extremely slow as you mentioned, so use the speed of the Alteryx engine to your advantage.

You'll have to work within the limitations imposed by "Update: Insert if New" to stop from blocking other applications from accessing the data. I see two potential ways to satisfy all these criteria and still have a workflow that run acceptable fast.ġ. Any other parallel process that tries to access the data in this incomplete table will be blocked by the lack of data. It also violates Point 2, since between the time you delete the data and reinsert all the values, the table will be incomplete. Hi the output method you've selected "Delete Data and Append" violates point 6, since deleting all the data effectively truncates the table.

0 Comments

Leave a Reply. |

Details

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed